EigenPhi HQ 🎯 Wisdom of DeFi (🔭, 🎙) 🦇🔊

Enterprise AI use cases are where verification often gets messy. But if you can leverage structured logs, economic intent, or agent behavior, you can strengthen the signal. Let's work together to bring that verifiable behavior into model training regimes.

Salesforce AI Research · Sep 24, 08:57

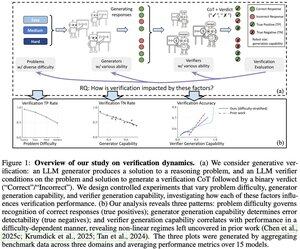

📣 Variation in Verification: Understanding Verification Dynamics in Large Language Models

📄 Paper:

🔗 Project:

Have you ever wondered whether your LLM verifier is actually reliable for your task? Our analysis framework reveals three key factors that determine verification success: problem difficulty, generator capability, and verifier capability.

Key insights:

📈 Problem difficulty governs correct-response recognition - verifiers excel on easy problems but struggle on hard ones

🔍 Generator strength affects error detection - weak generators produce obvious errors, strong ones produce elegant but wrong solutions

⚖️ Verifier scaling shows diminishing returns in certain regimes - sometimes GPT-4o barely beats smaller models

💡 For test-time scaling: weak generators + verification can match strong generators' performance, and expensive verifiers are not always worth it.
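The test-time scaling point can be illustrated with a toy simulation. This is only a minimal sketch, not the paper's setup: `weak_generator`, `verifier`, `best_of_n`, and `estimate_accuracy` are hypothetical names, the task (adding two integers) is invented for illustration, and the verifier is assumed to be perfectly reliable, which the post itself warns is often not the case.

```python
import random

def weak_generator(a, b, rng, accuracy=0.3):
    """Hypothetical weak generator: returns a + b only with probability `accuracy`."""
    if rng.random() < accuracy:
        return a + b
    return a + b + rng.choice([-2, -1, 1, 2])  # an off-by-a-little wrong answer

def verifier(a, b, answer):
    """Toy verifier: an exact check, so it never errs (an assumption of this sketch)."""
    return answer == a + b

def best_of_n(a, b, n, rng):
    """Sample up to n answers and return the first one the verifier accepts."""
    last = None
    for _ in range(n):
        last = weak_generator(a, b, rng)
        if verifier(a, b, last):
            return last
    return last  # nothing was accepted; fall back to the last sample

def estimate_accuracy(n, trials=2000, seed=0):
    """Fraction of random addition problems answered correctly with n samples."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        a, b = rng.randint(0, 99), rng.randint(0, 99)
        hits += best_of_n(a, b, n, rng) == a + b
    return hits / trials

if __name__ == "__main__":
    for n in (1, 2, 4, 8):
        print(n, estimate_accuracy(n))
```

With a 30%-accurate generator and a perfect verifier, the chance that at least one of n samples is correct is 1 - 0.7^n, so accuracy climbs quickly with n. A noisy verifier would flatten that curve, which is the regime the post is concerned with.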

Great work by Yefan Zhou @LiamZhou98, Austin Xu @austinsxu, Yilun Zhou @YilunZhou, Janvijay Singh @iamjanvijay, Jiang Gui @JiangGui, Shafiq Joty @JotyShafiq!

#LLM #AIVerification #TestTimeScaling #FutureOfAI #EnterpriseAI

Kudos to the TOOL team 👏 Elevating Ethereum to a hyperscale co-processor is a game-changer. On our side, scaling infrastructure only thrives when it is matched with transparent, auditable data on transaction processing and prioritization. Without that, low-latency finality opens the door to centralization.

0xprincess · Sep 24, 22:26

1// We are proud to announce the launch of the TOOL Testnet!

Verifier's law is a great lens, Jason. Curious what you think about domains like cryptography or on-chain records, where verification is nearly free but solution complexity explodes? 💭🔐
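A hash puzzle is one small illustration of that gap. The sketch below is an assumption-laden toy, not any particular chain's scheme: `verify` and `solve` are hypothetical helpers, and the difficulty is kept tiny so the search finishes quickly. Checking a candidate costs one SHA-256 call, while finding one takes a brute-force search whose expected length grows by a factor of 16 per extra leading hex zero.

```python
import hashlib
from itertools import count

def verify(prefix: bytes, nonce: int, zero_hex_digits: int) -> bool:
    """Cheap check: a single hash, then a prefix comparison."""
    digest = hashlib.sha256(prefix + str(nonce).encode()).hexdigest()
    return digest.startswith("0" * zero_hex_digits)

def solve(prefix: bytes, zero_hex_digits: int) -> int:
    """Expensive search: try nonces 0, 1, 2, ... until one verifies."""
    for nonce in count():
        if verify(prefix, nonce, zero_hex_digits):
            return nonce

if __name__ == "__main__":
    nonce = solve(b"demo", 3)  # roughly 16**3 attempts expected
    print(nonce, verify(b"demo", nonce, 3))
```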

Jason Wei · July 16, 2025

New blog post about asymmetry of verification and "verifier's law":

Asymmetry of verification–the idea that some tasks are much easier to verify than to solve–is becoming an important idea as we have RL that finally works generally.

Great examples of asymmetry of verification are things like sudoku puzzles, writing the code for a website like instagram, and BrowseComp problems (takes ~100 websites to find the answer, but easy to verify once you have the answer).
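The sudoku case can be made concrete with a short sketch. The names `is_valid_solution` and `example_grid` are hypothetical, and the check covers only a completed 9x9 grid (it does not compare against a puzzle's given clues). Verification is 27 set comparisons, whereas solving generally needs search.

```python
def is_valid_solution(grid):
    """Return True if every row, column, and 3x3 box holds the digits 1..9 exactly once."""
    digits = set(range(1, 10))
    rows = [set(row) for row in grid]
    cols = [set(col) for col in zip(*grid)]
    boxes = [
        {grid[r][c] for r in range(br, br + 3) for c in range(bc, bc + 3)}
        for br in (0, 3, 6)
        for bc in (0, 3, 6)
    ]
    return all(group == digits for group in rows + cols + boxes)

def example_grid():
    """Build a known-valid filled grid from a simple shifting pattern."""
    return [[(3 * (r % 3) + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]

if __name__ == "__main__":
    grid = example_grid()
    print(is_valid_solution(grid))
    grid[0][0], grid[0][1] = grid[0][1], grid[0][0]  # break two columns
    print(is_valid_solution(grid))
```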

Other tasks have near-symmetry of verification, like summing two 900-digit numbers or some data processing scripts. Yet other tasks are much easier to propose feasible solutions for than to verify them (e.g., fact-checking a long essay or stating a new diet like "only eat bison").

An important thing to understand about asymmetry of verification is that you can improve the asymmetry by doing some work beforehand. For example, if you have the answer key to a math problem or if you have test cases for a Leetcode problem. This greatly increases the set of problems with desirable verification asymmetry.

"Verifier's law" states that the ease of training AI to solve a task is proportional to how verifiable the task is. All tasks that are possible to solve and easy to verify will be solved by AI. The ability to train AI to solve a task is proportional to whether the task has the following properties:

1. Objective truth: everyone agrees what good solutions are

2. Fast to verify: any given solution can be verified in a few seconds

3. Scalable to verify: many solutions can be verified simultaneously

4. Low noise: verification is as tightly correlated to the solution quality as possible

5. Continuous reward: it’s easy to rank the goodness of many solutions for a single problem

One obvious instantiation of verifier's law is the fact that most benchmarks proposed in AI are easy to verify and so far have been solved. Notice that virtually all popular benchmarks in the past ten years fit criteria #1-4; benchmarks that don’t meet criteria #1-4 would struggle to become popular.

Why is verifiability so important? The amount of learning in AI that occurs is maximized when the above criteria are satisfied; you can take a lot of gradient steps where each step has a lot of signal. Speed of iteration is critical—it’s the reason that progress in the digital world has been so much faster than progress in the physical world.

AlphaEvolve from Google is one of the greatest examples of leveraging asymmetry of verification. It focuses on setups that fit all the above criteria, and has led to a number of advancements in mathematics and other fields. Different from what we've been doing in AI for the last two decades, it's a new paradigm in that all problems are optimized in a setting where the train set is equivalent to the test set.

Asymmetry of verification is everywhere and it's exciting to consider a world of jagged intelligence where anything we can measure will be solved.
