EigenPhi HQ 🎯 Wisdom of DeFi (🔭, 🎙) 🦇🔊

Enterprise AI use cases are the ones where verification often gets complicated. But if you can leverage structured logs, economic intent, or agent behavior, you can strengthen the signal. Let's work together to bring those verifiable behaviors into model training regimes.

Salesforce AI Research · 24 Sep, 08:57

📣 Variation in Verification: Understanding Verification Dynamics in Large Language Models

📄 Paper:

🔗 Project:

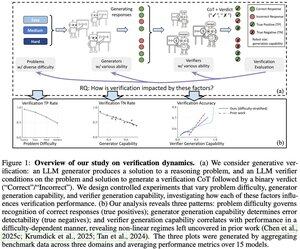

Ever wondered whether your LLM verifier is actually reliable for your task? Our analysis framework reveals three key factors that determine verification success: problem difficulty, generator capability, and verifier capability.

Key insights:

📈 Problem difficulty drives the recognition of correct responses - verifiers excel on easy problems but struggle on hard ones

🔍 Generator strength affects error detection - weak generators produce obvious errors, strong ones create elegant but wrong solutions

⚖️ Verifier scaling shows diminishing returns in some regimes - sometimes GPT-4o barely outperforms smaller models

💡 For test-time scaling: weak generators + verification can match the performance of strong generators, and expensive verifiers are not always worth it.

Great work by Yefan Zhou @LiamZhou98, Austin Xu @austinsxu, Yilun Zhou @YilunZhou, Janvijay Singh @iamjanvijay, Jiang Gui @JiangGui, Shafiq Joty @JotyShafiq!

#LLM #AIVerification #TestTimeScaling #FutureOfAI #EnterpriseAI
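The test-time scaling point above (weak generator + verification) can be illustrated with a toy best-of-N simulation. This is only a sketch under made-up assumptions, not the paper's setup: the generator's 30% hit rate, the verifier's 90% accuracy, and the helper names `make_weak_generator`, `make_noisy_verifier`, and `accuracy_at_n` are all hypothetical.

```python
import random


def best_of_n(generate, score, n):
    """Sample n candidates and return the one the verifier scores highest."""
    candidates = [generate() for _ in range(n)]
    return max(candidates, key=score)


def make_weak_generator(rng, correct_answer, p_correct):
    """Hypothetical generator: correct with probability p_correct, else off by 1..9."""
    def generate():
        if rng.random() < p_correct:
            return correct_answer
        return correct_answer + rng.randint(1, 9)
    return generate


def make_noisy_verifier(rng, correct_answer, accuracy):
    """Hypothetical verifier: returns the true 0/1 label with probability `accuracy`,
    and the flipped label otherwise."""
    def score(candidate):
        truth = 1.0 if candidate == correct_answer else 0.0
        return truth if rng.random() < accuracy else 1.0 - truth
    return score


def accuracy_at_n(n, trials=2000, seed=0):
    """Fraction of trials in which best-of-n selects the correct answer."""
    rng = random.Random(seed)
    generate = make_weak_generator(rng, correct_answer=42, p_correct=0.3)
    score = make_noisy_verifier(rng, correct_answer=42, accuracy=0.9)
    hits = sum(best_of_n(generate, score, n) == 42 for _ in range(trials))
    return hits / trials


if __name__ == "__main__":
    for n in (1, 2, 4, 8):
        print(n, accuracy_at_n(n))
```

In this toy model the gain from extra samples is capped by verifier noise: once at least one candidate is accepted, the chance that the accepted one is actually correct depends on the verifier's accuracy, not on N.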


Congrats to the TOOL team 👏 Elevating Ethereum into a hyperscalable co-processor is a game changer. From our side, scaling infrastructure only thrives when it is paired with transparent, verifiable data on transaction handling and prioritization. Without that, low-latency finality opens the door to centralization.

0xprincess · 24 Sep, 22:26

1// We're proud to announce the launch of the TOOL Testnet!


Verifier's law is a great lens, Jason. I'm curious what you think about domains like cryptography or on-chain ledgers, where verification is nearly free but solution complexity explodes? 💭🔐

Jason Wei · 16 Jul 2025

New blog post about asymmetry of verification and "verifier's law":

Asymmetry of verification–the idea that some tasks are much easier to verify than to solve–is becoming an important idea as we have RL that finally works generally.

Great examples of asymmetry of verification are things like sudoku puzzles, writing the code for a website like instagram, and BrowseComp problems (takes ~100 websites to find the answer, but easy to verify once you have the answer).
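To make the sudoku example concrete, here is a minimal sketch of the cheap side of the asymmetry: checking a filled 9x9 grid takes one pass over 27 groups, whereas finding a solution generally requires search. The function name `is_valid_sudoku_solution` and the example grid are illustrative, not from the post.

```python
def is_valid_sudoku_solution(grid):
    """Return True if grid is a 9x9 list of lists in which every row,
    column, and 3x3 box contains the digits 1..9 exactly once."""
    if len(grid) != 9 or any(len(row) != 9 for row in grid):
        return False
    digits = set(range(1, 10))
    rows = [set(row) for row in grid]
    cols = [set(col) for col in zip(*grid)]
    boxes = [
        {grid[r + dr][c + dc] for dr in range(3) for dc in range(3)}
        for r in range(0, 9, 3)
        for c in range(0, 9, 3)
    ]
    # With exactly 9 cells per group, equality with {1..9} rules out repeats.
    return all(group == digits for group in rows + cols + boxes)


# A known-valid grid built from a standard shifting pattern.
solved = [[(3 * (r % 3) + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]

# Swapping two cells in the first row keeps that row valid but breaks two columns.
broken = [row[:] for row in solved]
broken[0][0], broken[0][1] = broken[0][1], broken[0][0]

if __name__ == "__main__":
    print(is_valid_sudoku_solution(solved))
    print(is_valid_sudoku_solution(broken))
```

A full checker for a specific puzzle would also confirm that the filled grid agrees with the puzzle's given cells; that check is omitted here for brevity.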

Other tasks have near-symmetry of verification, like summing two 900-digit numbers or some data processing scripts. Yet other tasks are much easier to propose feasible solutions for than to verify them (e.g., fact-checking a long essay or stating a new diet like "only eat bison").

An important thing to understand about asymmetry of verification is that you can improve the asymmetry by doing some work beforehand. For example, if you have the answer key to a math problem or if you have test cases for a Leetcode problem. This greatly increases the set of problems with desirable verification asymmetry.
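The "work beforehand" idea can be sketched with test cases: once someone has written them, verifying any candidate solution reduces to running it. The snippet below is an illustrative assumption rather than anything from the post; `pass_rate`, the palindrome task, and both candidates are hypothetical.

```python
def pass_rate(candidate, test_cases):
    """Run candidate on each (args, expected) pair and return the fraction passed.
    A raised exception counts as a failed case."""
    passed = 0
    for args, expected in test_cases:
        try:
            if candidate(*args) == expected:
                passed += 1
        except Exception:
            pass
    return passed / len(test_cases)


# Test cases prepared ahead of time for a hypothetical "is this string a palindrome?" task.
TESTS = [
    (("racecar",), True),
    (("ab",), False),
    (("",), True),
    (("Aa",), False),
]


def correct(s):
    return s == s[::-1]


def buggy(s):
    # Only compares the first and last characters, and crashes on the empty string.
    return s[0] == s[-1]


if __name__ == "__main__":
    print(pass_rate(correct, TESTS))  # 1.0
    print(pass_rate(buggy, TESTS))    # 0.75
```

Returning a fraction instead of a single pass/fail bit also lets several candidates for the same problem be ranked against each other.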

"Verifier's law" states that the ease of training AI to solve a task is proportional to how verifiable the task is. All tasks that are possible to solve and easy to verify will be solved by AI. The ability to train AI to solve a task is proportional to whether the task has the following properties:

1. Objective truth: everyone agrees what good solutions are

2. Fast to verify: any given solution can be verified in a few seconds

3. Scalable to verify: many solutions can be verified simultaneously

4. Low noise: verification is as tightly correlated to the solution quality as possible

5. Continuous reward: it’s easy to rank the goodness of many solutions for a single problem

One obvious instantiation of verifier's law is the fact that most benchmarks proposed in AI are easy to verify and so far have been solved. Notice that virtually all popular benchmarks in the past ten years fit criteria #1-4; benchmarks that don’t meet criteria #1-4 would struggle to become popular.

Why is verifiability so important? The amount of learning in AI that occurs is maximized when the above criteria are satisfied; you can take a lot of gradient steps where each step has a lot of signal. Speed of iteration is critical—it’s the reason that progress in the digital world has been so much faster than progress in the physical world.

AlphaEvolve from Google is one of the greatest examples of leveraging asymmetry of verification. It focuses on setups that fit all the above criteria, and has led to a number of advancements in mathematics and other fields. Different from what we've been doing in AI for the last two decades, it's a new paradigm in that all problems are optimized in a setting where the train set is equivalent to the test set.

Asymmetry of verification is everywhere and it's exciting to consider a world of jagged intelligence where anything we can measure will be solved.

