EigenPhi HQ 🎯 Wisdom of DeFi (🔭, 🎙) 🦇🔊

Enterprise AI applications are where verification often gets messy. But if you can tap into structured logs, economic intent, or agent behavior, you can amplify the signal. Let's work together to bring those verifiable behaviors into model training regimes.

Salesforce AI Research · Sep 24, 08:57

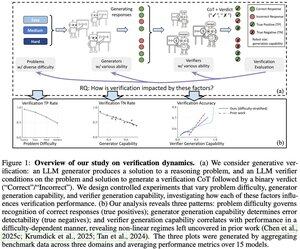

📣 Variation in Verification: Understanding Verification Dynamics in Large Language Models

📄 Paper:

🔗 Project:

Ever wondered whether your LLM verifier is actually reliable for your task? Our analysis framework reveals three key factors that determine verification success: problem difficulty, generator capability, and verifier capability.

Key insights:

📈 Problem difficulty drives the recognition of correct answers - verifiers perform well on easy problems but struggle with hard ones.

🔍 Generator strength affects error detection - weak generators produce obvious errors, strong ones create elegant but wrong solutions.

⚖️ Verifier scale shows diminishing returns in certain regimes - sometimes GPT-4o barely beats smaller models.

💡 For test-time scaling: weak generators + verification can match the performance of strong generators, and expensive verifiers are not always worth it.
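The test-time scaling point can be illustrated with a toy simulation. This is a minimal sketch and not the paper's method: the function name `best_of_n_accuracy` and every number below (per-sample accuracy, verifier acceptance rates) are assumptions chosen only for illustration. A generator samples `n_samples` answers, a noisy verifier accepts or rejects each one, and one accepted answer is picked at random.

```python
import random

def best_of_n_accuracy(p_correct, n_samples, tpr, fpr, trials=20000, seed=0):
    """Estimate accuracy when a noisy verifier filters n sampled answers.

    p_correct: chance that a single generator sample is correct (assumed)
    tpr: chance the verifier accepts a correct answer (assumed)
    fpr: chance the verifier accepts a wrong answer (assumed)
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(trials):
        # Draw n answers; True means the answer is correct.
        samples = [rng.random() < p_correct for _ in range(n_samples)]
        # The verifier keeps each answer with a probability that depends on correctness.
        accepted = [ok for ok in samples if rng.random() < (tpr if ok else fpr)]
        # If nothing was accepted, fall back to picking from all samples.
        pool = accepted if accepted else samples
        wins += rng.choice(pool)
    return wins / trials

# Hypothetical setup: a weak generator with 8 verified samples
# versus a strong generator answering once.
weak_verified = best_of_n_accuracy(p_correct=0.3, n_samples=8, tpr=0.9, fpr=0.1)
strong_single = best_of_n_accuracy(p_correct=0.7, n_samples=1, tpr=0.9, fpr=0.1)
print(f"weak + verifier: {weak_verified:.3f}, strong alone: {strong_single:.3f}")
```

Lowering `tpr` or raising `fpr` in this sketch shrinks the gain from verification, which is one way to see why verifier quality on a given problem regime matters more than verifier size alone.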

Great work by Yefan Zhou @LiamZhou98, Austin Xu @austinsxu, Yilun Zhou @YilunZhou, Janvijay Singh @iamjanvijay, Jiang Gui @JiangGui, Shafiq Joty @JotyShafiq!

#LLM #AIVerification #TestTimeScaling #FutureOfAI #EnterpriseAI


Kudos to the TOOL team 👏 Elevating Ethereum into a hyperscale co-processor is a game-changer. On our side, scaling infrastructure only thrives when it is paired with transparent, auditable data on transaction processing and prioritization. Without that, low-latency finality opens the door to centralization.

0xprincess · Sep 24, 22:26

1// We are proud to announce the launch of the TOOL Testnet!


Verifier's law is a great lens, Jason. Curious what you think about domains like cryptography or on-chain records, where verification is nearly free but the complexity of finding a solution explodes? 💭🔐
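A toy hash puzzle shows the kind of gap in question. This is only an illustrative sketch, assuming a proof-of-work style task that is not taken from either post: `verify` costs one SHA-256 call, while `solve` has to brute-force nonces, and the expected number of attempts grows by a factor of 16 for every extra hex digit in the required prefix. The names `verify`, `solve`, and the `b"block"` payload are hypothetical.

```python
import hashlib
from itertools import count
from typing import Tuple

def verify(nonce: int, prefix: str, data: bytes = b"block") -> bool:
    """Checking a proposed nonce takes a single hash computation."""
    digest = hashlib.sha256(data + str(nonce).encode()).hexdigest()
    return digest.startswith(prefix)

def solve(prefix: str, data: bytes = b"block") -> Tuple[int, int]:
    """Brute-force search; returns the first valid nonce and the attempts used."""
    for attempts, nonce in enumerate(count(), start=1):
        if verify(nonce, prefix, data):
            return nonce, attempts

nonce, attempts = solve("00")
print(f"found nonce {nonce} after {attempts} hashes; verifying it takes 1 hash")
```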

Jason Wei · Jul 16, 2025

New blog post about asymmetry of verification and "verifier's law":

Asymmetry of verification, the idea that some tasks are much easier to verify than to solve, is becoming an important idea as we have RL that finally works generally.

Great examples of asymmetry of verification are things like sudoku puzzles, writing the code for a website like instagram, and BrowseComp problems (takes ~100 websites to find the answer, but easy to verify once you have the answer).
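The sudoku case is easy to make concrete. As a minimal sketch (the function name `is_valid_sudoku` and the pattern used to build the example grid are assumptions for illustration), checking a completed 9x9 grid only needs one pass over 27 groups of cells, whereas producing the grid generally requires search. The check below covers the row, column, and box constraints only; it does not compare the grid against a puzzle's given clues.

```python
def is_valid_sudoku(grid):
    """Return True if every row, column, and 3x3 box contains 1..9 exactly once."""
    digits = set(range(1, 10))
    rows = [set(row) for row in grid]
    cols = [set(col) for col in zip(*grid)]
    boxes = [
        {grid[r + dr][c + dc] for dr in range(3) for dc in range(3)}
        for r in range(0, 9, 3)
        for c in range(0, 9, 3)
    ]
    return all(group == digits for group in rows + cols + boxes)

# A known-valid grid built from a shifting pattern (illustrative data).
solved = [[(3 * (r % 3) + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]

# Copy the grid and duplicate one digit to break the first row.
broken = [row[:] for row in solved]
broken[0][0] = broken[0][1]

print(is_valid_sudoku(solved), is_valid_sudoku(broken))
```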

Other tasks have near-symmetry of verification, like summing two 900-digit numbers or some data processing scripts. Yet other tasks are much easier to propose feasible solutions for than to verify them (e.g., fact-checking a long essay or stating a new diet like "only eat bison").

An important thing to understand about asymmetry of verification is that you can improve the asymmetry by doing some work beforehand. For example, if you have the answer key to a math problem or if you have test cases for a Leetcode problem. This greatly increases the set of problems with desirable verification asymmetry.

"Verifier's law" states that the ease of training AI to solve a task is proportional to how verifiable the task is. All tasks that are possible to solve and easy to verify will be solved by AI. The ability to train AI to solve a task is proportional to whether the task has the following properties:

1. Objective truth: everyone agrees what good solutions are

2. Fast to verify: any given solution can be verified in a few seconds

3. Scalable to verify: many solutions can be verified simultaneously

4. Low noise: verification is as tightly correlated to the solution quality as possible

5. Continuous reward: it’s easy to rank the goodness of many solutions for a single problem
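Properties 2 and 5 can be sketched together with a test-case based verifier, in the spirit of the Leetcode example. This is an assumed illustration, not something from the blog post: the `reward` function, the absolute-value task, and the three candidate solutions are all hypothetical. Each candidate is scored by the fraction of test cases it passes, which is fast to compute and gives a graded signal for ranking instead of a bare pass or fail.

```python
def reward(candidate, test_cases):
    """Fraction of test cases a candidate function passes, from 0.0 to 1.0."""
    passed = 0
    for args, expected in test_cases:
        try:
            if candidate(*args) == expected:
                passed += 1
        except Exception:
            pass  # a crash counts as a failed case
    return passed / len(test_cases)

# Hypothetical task: implement absolute value. Tests are (arguments, expected result).
TESTS = [((3,), 3), ((-3,), 3), ((0,), 0), ((-7,), 7)]

candidates = {
    "identity": lambda x: x,
    "negate": lambda x: -x,
    "correct": lambda x: x if x >= 0 else -x,
}

# Rank candidates from highest to lowest reward.
ranking = sorted(candidates, key=lambda name: reward(candidates[name], TESTS), reverse=True)
print(ranking)
```

Because each call to `reward` is independent, many candidates could be scored in parallel, which is the "scalable to verify" property in the same list.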

One obvious instantiation of verifier's law is the fact that most benchmarks proposed in AI are easy to verify and so far have been solved. Notice that virtually all popular benchmarks in the past ten years fit criteria #1-4; benchmarks that don’t meet criteria #1-4 would struggle to become popular.

Why is verifiability so important? The amount of learning in AI that occurs is maximized when the above criteria are satisfied; you can take a lot of gradient steps where each step has a lot of signal. Speed of iteration is critical—it’s the reason that progress in the digital world has been so much faster than progress in the physical world.

AlphaEvolve from Google is one of the greatest examples of leveraging asymmetry of verification. It focuses on setups that fit all the above criteria, and has led to a number of advancements in mathematics and other fields. Different from what we've been doing in AI for the last two decades, it's a new paradigm in that all problems are optimized in a setting where the train set is equivalent to the test set.

Asymmetry of verification is everywhere and it's exciting to consider a world of jagged intelligence where anything we can measure will be solved.
